Through the Looking Glass with Google

J. Macgregor Wise/Arizona State University

On April 4, 2012, Google released a video announcing what they were calling Project Glass.1 Project Glass is an ongoing research project at Google’s super-secret Google X lab to produce augmented reality glasses. These are glasses that work as a smartphone. Information would be displayed on the lens of the glasses, overlaying the world in front of one. Project Glass provides a screen (television, computer, telephone) that we look both through and at, that overlays the world with what Paul Virilio once called stereo-reality.2

Information appears on your glasses either contextually (look out the window and get a weather report, look at the subway stairs and see a warning of a shutdown) or through verbal commands (cf. Siri for the iPhone), hand gestures, eye movements, or head tilts or gestures. The glasses can provide directions and include a camera. And, presumably, you could stream video content to your glasses wherever you happened to be, viewing it in privacy with no one able to look over your shoulder to see what you were watching.

The video, Project Glass: One Day…, presents a day in the life of an unnamed protagonist. We never actually see him, since the video is shot ostensibly from the perspective of his glasses. But we get the sense that he is a young male, living alone. He wakes, drinks coffee, eats breakfast, arranges to meet a friend for (more) coffee, and heads out for his day.3 His glasses warn him that a subway line is closed and map an alternate walking route for him to a bookstore. The glasses even give him a map of the store and directions to the section he is looking for (where he finds a book on learning to play the ukulele). He meets his friend. Later that day he heads up to his roof where he has a live videochat with a woman we presume is his girlfriend; he streams for her his view of the city at sunset while he plays a tune on the ukulele he just bought and learned to play. Throughout the day he has photographed graffiti and shared it with friends, set up a reminder to buy concert tickets, gotten the weather report by looking out a window, looked up the location of a friend, and streamed background music. Indeed, what we presume is the nondiegetic music playing throughout the video is turned off by him at the end when he speaks with his girlfriend.

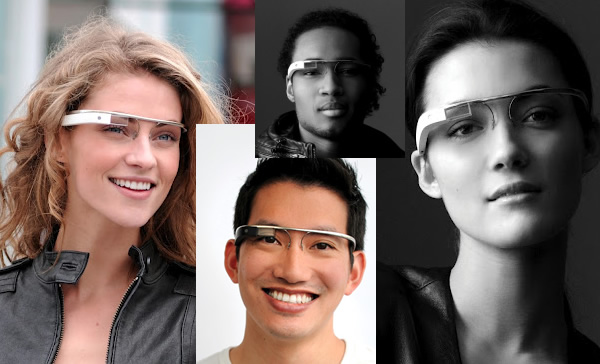

Though the video constituted the announcement of this project to the general public, bloggers and columnists4 had been hinting for months that Google was developing these glasses, and what their capabilities would be. Google executives mentioned that one of the reasons they went public with the concept video was that they were tired of only wearing them in the lab, and now could test them in public.5 Indeed, a new type of sport developed: spotting Google employees wearing the glasses “in the wild.” Finally Google collaborated with designer Diane von Furstenberg in September to feature her models wearing Google Glasses at their show during fashion week.6

The parodies of the Google video were quick to come and while humorous were rather predictable. Videos showed distracted users of Google’s glasses running into things, falling down stairs, or accidentally enabling features through verbal comments or gestures, sending inappropriate messages, and so on.

The idea of Project Glass is actually not a new one. Indeed, it is the latest in a long line of developments in the area of augmented reality research. Augmented reality devices are ones that add information to daily life, and are often differentiated from virtual reality devices which completely replace the immediate environment with a simulated display. The latter could be accomplished via a virtual room (think of the holodeck on Star Trek) or VR helmets. The work of Steve Mann is germinal here.7 Mann, a self-professed cyborg, has been building his own augmented reality devices since the 1980s, using a camera to capture what is in front of him, running the information through a computer, and projecting the resulting image on his eye or eyes. Using the computer he can alter the incoming image in real time, changing colors of objects, the orientation of the world, the frame capture rate, and so on, including overlaying information, like email or other data. Mann’s mission is to take back control of our overmediated environment, creating the world as we want it to look, even deleting unsavory elements. Mann suggests, for example, deleting all billboards.

Today most augmented reality systems work through mobile handheld screens rather than head-mounted displays. Users access locationally relevant information by scanning bar codes or using locational data. Howard Rheingold reported users doing such things a decade ago.8 In recent years augmented reality games have exploited the capabilities of this technology.

While some praised the innovations and possibilities of Project Glass, and others critiqued their distractions, some saw the project as representing broader cultural issues. For example, in a piece in the New York Times, conservative columnist Ross Douthat commented on the Project Glass video.9 “Even if the project itself never comes to fruition, though,” he writes, “the video deserves a life of its own, as a window into what our era promises and what it threatens to take away. If modernity’s mix of achievement and alienation was once embodied by the Man in the Gray Flannel Suit, now it’s embodied by the Man in the Google Glasses.” The Man in the Google Glasses has almost unlimited access to information at a moment’s notice, anywhere he travels. But at the same time, he lives alone in a rather bare apartment, spending much of his socialization time online (though he does meet a friend for coffee).

Project Glass is but the latest iteration of a new technological assemblage currently being mapped by research on mobile media and mobile interfaces, an assemblage I have called the Clickable World.10 As Douthat points out, when it comes to these issues there are both optimists (crowd socialization has benefits) and pessimists (we are more and more alone).11 Too many issues get raised here to be dealt with in such a short column. But let me mention that these glasses, functioning as presented in the video, might afford further cyber-enabled cocooning: not only are we reinforcing established social networks at the expense of non-mediated serendipitous encounters (as other social media are wont to do as well), but through the glasses we may only see things of comfort and familiarity to us. I am reminded of Zaphod Beeblebrox’s Peril Sensitive Sunglasses in The Hitchhiker’s Guide to the Galaxy: at the first sign of trouble they turn completely black so you don’t see anything alarming and can carry on without panic or stress.

And then there are troubling questions of privacy and surveillance (of which Google seems aware). A tremendous data infrastructure needs to be in place to provide content appropriate information anywhere in a city, and the system needs, likewise, to track users, recording and analyzing their activities. The positive version of this assemblage is advocated for by lifeloggers and those who promote the benefits of the Quantified Self.12 For the negative version, let me just point out that April 4, the day Google released its video and presumably the day its Joycean hero navigates his everyday, is also the first day of Winston Smith’s diary.

April 4, 1984. Last night to the flicks. All war films. One very good one…13

Image Credits:

1. Google Project Glass

2. Project Glass Map and Route

3. Project Glass Heads Up Display

4. Project Glass Street Directions


1. https://plus.google.com/+projectglass/posts

2. Virilio, Paul. 2000. The Information Bomb. Trans. Chris Turner. NY: Verso. Cf. Jay Bolter and Richard Grusin’s ideas of transparent and hypermedia in Remediation, MIT Press, 2000.

3. Significantly, we do not see him at work, or hear mention of work. The day depicted is one of leisure and consumption.

4. Bilton, Nick. 21 Feb., 2012. Google to sell heads-up display glasses by year’s end. New York Times. http://bits.blogs.nytimes.com/2012/02/21/google-to-sell-terminator-style-glasses-by-years-end/

5. Carr, Austin. 30 May, 2012. Inside Google X’s Project Glass, Part I. FastCompany.com. http://www.fastcompany.com/1838801/inside-google-xs-project-glass-part-i

6. https://plus.google.com/s/%23DVFthroughGlass

7. Mann, Steve & Hal Niedzviecki. 2001. Cyborg. Anchor Canada.

8. Rheingold, Howard. 2003. Smart Mobs: The Next Social Revolution. Cambridge, MA: Perseus Publishing.

9. Douthat, Ross. 14 April, 2012. The Man with the Google Glasses. New York Times. http://www.nytimes.com/2012/04/15/opinion/sunday/douthat-the-man-with-the-google-glasses.html?_r=0

10. Wise, J. Macgregor. 2012. Attention and Assemblage in the Clickable World. In Jeremy Packer and Stephen B. Crofts Wiley (eds) Communication Matters: Materialist Approaches to Media, Mobility, and Networks. NY: Routledge.

11. Douthat cites Clay Shirky as representative of the former, and Sherry Turkle of the latter.

12. E.g., Bell, Gordon & Jim Gemmell. 2009. Total Recall: How the E-Memory Revolution will Change Everything. Dutton. Wolf, Gary. 28 April, 2010. The Data-Driven Life. The New York Times. http://www.nytimes.com/2010/05/02/magazine/02self-measurement-t.html?pagewanted=all

13. Orwell, George. 1949. 1984. Harcourt Brace and Company.
